I've added a sanity check to my common GitHub Actions workflows for building Docker containers: after the image is built, run the tool's -h. I've been bitten a couple of times by shared lib version mismatches between build and runtime base images. This at least verifies that the binary is in place and works!

    - name: Build
      uses: docker/build-push-action@v6
      with:
        platforms: ${{ inputs.docker_platforms }}
        context: ${{ inputs.context }}
        cache-from: type=gha
        cache-to: type=gha,mode=max
        load: true
        tags: local-build:${{ github.sha }}
        push: false
    - name: Check Container
      if: inputs.check_command != ''
      run: |
        docker run local-build:${{ github.sha }} ${{ inputs.check_command }}

I'm running into this issue with my local Docker Registry where things seem corrupted after a garbage collect. I thought it was how I was deleting multi-arch images, but maybe not! I'm just disabling garbage collection on my system for now.

I'd love to try out OrioleDB for its decoupled storage when running Postgres. Keeping all data on S3/MinIO would ease my DB disk management and remove any reliance on remote filesystems for Postgres.

The current limitation, "While OrioleDB tables and materialized views are stored incrementally in the S3 bucket, the history is kept forever. There is currently no mechanism to safely remove the old data.", prevents me from running it fully though. I'll have to keep an eye on the dev / next release.

Hadn't heard of sigstore before. Need to keep this list around for the next time I see someone recommending PGP.

With 0.0.9 of declare_schema I'm starting to fail if migrations cannot be run. At the moment these failures tend to reflect room for improvement in my implementation rather than limitations in Postgres.

Current limitations:

- Cannot modify table constraints. As of Postgres 17, only foreign key constraints can be altered anyway.

- Cannot modify indexes. I'd like to improve this but it's also super messy. Index creation can take a while. I'm not sure how I'd like to handle this, so the method at the moment is to create a new index and later drop the old one.
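The "create a new index, later drop the old one" workaround can be sketched as a small statement generator. This is only an illustration and not part of declare_schema: the function name `replace_index_statements` and its parameters are hypothetical, and the use of `CONCURRENTLY` is my assumption (it avoids blocking writes but cannot run inside a transaction block).

```rust
/// Hypothetical helper (not part of declare_schema): build the two statements
/// needed to swap an index by creating its replacement first.
///
/// Assumption: CONCURRENTLY is wanted so writes are not blocked while the
/// new index builds. These statements cannot run inside a transaction block.
fn replace_index_statements(
    table: &str,
    old_index: &str,
    new_index: &str,
    columns: &[&str],
) -> Vec<String> {
    vec![
        // Step 1: build the replacement index next to the old one.
        format!(
            "CREATE INDEX CONCURRENTLY {} ON {} ({})",
            new_index,
            table,
            columns.join(", ")
        ),
        // Step 2: once the new index is valid, drop the old one.
        format!("DROP INDEX CONCURRENTLY {}", old_index),
    ]
}
```

The two statements are returned separately so the drop can be run later, after checking that the new index built successfully.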

I'm starting to get fairly confident in my usage of it. Going forward I hope to work more on docs and examples.

I've been meaning to try out this IdType trait pattern. My SQLx usage so far somewhat benefits from different structs for to-write-data and read-data so I haven't quite gotten around to testing it out.


Some of my projects recently failed during lockfile updates with:

    error "OPENSSL_API_COMPAT expresses an impossible API compatibility level"

The lockfile updates included openssl-sys-0.9.104. I remember not really needing OpenSSL at all, leaning towards rustls for the most part. This was the push I needed to figure out why OpenSSL was still being included.

reqwest includes default-tls as a default feature, which seems to be native-tls, a.k.a. OpenSSL. Removing default features worked for my projects:

    reqwest = { version = "0.12.4", features = ["rustls-tls", "json"], default-features = false }

While upgrading to Postgres 17 I ran into a few problems in my setup:

- I didn't update pg_dump as well, so backups stopped for a few days.

- pg_dump for Postgres 17 (in some conditions? at least in my setup) requires ALPN with TLS.

From the release notes:

Allow TLS connections without requiring a network round-trip negotiation (Greg Stark, Heikki Linnakangas, Peter Eisentraut, Michael Paquier, Daniel Gustafsson)

This is enabled with the client-side option sslnegotiation=direct, requires ALPN, and only works on PostgreSQL 17 and later servers.

I run Traefik to proxy Postgres connections, taking advantage of TLS SNI so a single Postgres port can be opened in Traefik and it will route the connection to the appropriate Postgres instance. Traefik, understandably, doesn't default to advertising that it supports the postgresql protocol over TLS. This must be done explicitly.

In Traefik I was seeing logs such as tls: client requested unsupported application protocols ([postgresql])

From pg_dump the log was SSL error: tlsv1 alert no application protocol "postgres"
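Both messages look like two sides of the same ALPN mismatch. A minimal sketch of the selection step, assuming the server simply picks the first protocol in its own list that the client also offered (the function name `negotiate_alpn` is hypothetical and this is not Traefik's code):

```rust
/// Simplified ALPN selection: return the first protocol in the server's
/// preference list that the client also offered, or None if there is no
/// overlap. A real TLS server answers None with a
/// "no application protocol" alert and closes the connection.
fn negotiate_alpn<'a>(
    server_supported: &[&'a str],
    client_offered: &[&str],
) -> Option<&'a str> {
    server_supported
        .iter()
        .copied()
        .find(|proto| client_offered.contains(proto))
}
```

With a server list of only "http/1.1" and "h2", a client offering only "postgresql" gets no match, which is the failure above. Adding "postgresql" to the server list makes the same offer succeed.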

Fixing this required configuring Traefik to explicitly say postgresql was supported.

    # Dynamic configuration
    [tls.options]
      [tls.options.default]
        alpnProtocols = ["http/1.1", "h2", "postgresql"]

This, as documented, is dynamic configuration. It must go in a dynamic config file, not the static one. In my static config I needed to add:

    [providers]
      [providers.file]
        directory = "/local/dynamic"
        watch = true

Where /local/dynamic is a dir that contains dynamic configuration. I was unable to get the alpnProtocols set with Nomad dynamic configuration. I always ran into invalid node options: string when Traefik tried to load the config from Consul. Maybe from this

Pleased again with SQLx tests while adding tests against Postgres to test migrations. Previously there weren't any automated tests for "what does this lib pull from Postgres"; I was doing that manually.

    use sqlx::PgPool;

    #[sqlx::test]
    async fn test_drop_foreign_key_constraint(pool: PgPool) {
        // Put the database into the starting state, including the foreign key.
        crate::migrate_from_string(
            r#"
            CREATE TABLE items (id uuid NOT NULL, PRIMARY KEY(id));
            CREATE TABLE test (id uuid, CONSTRAINT fk_id FOREIGN KEY(id) REFERENCES items(id))"#,
            &pool,
        )
        .await
        .expect("Setup");

        // Ask for the migrations needed to reach a schema without the foreign key.
        let m = crate::generate_migrations_from_string(
            r#"
            CREATE TABLE items (id uuid NOT NULL, PRIMARY KEY(id));
            CREATE TABLE test (id uuid)"#,
            &pool,
        )
        .await
        .expect("Migrate");

        // The only expected change is dropping the constraint.
        let alter = vec![r#"ALTER TABLE test DROP CONSTRAINT fk_id CASCADE"#];
        assert_eq!(m, alter);
    }

Really impressed with SQLx testing. Super simple to create tests that use the DB in a way that works well in dev and CI environments. I'm not using the migration feature but instead have my own setup to get the DB into the right state at the beginning of tests.

Half of how I use the web is with RSS. I can't really imagine having to go back to finding new things on various pages to see what's new.

Making a PR to SQLx to add Postgres lquery arrays. This took less time than I expected to try and fix. More time was spent wrangling my various projects to use a local sqlx dependency.

Postgres ltree has a wonderful ? operator that will check an array of lquerys. I plan to use this to allow filtering multiple labels in my expense tracker.
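To illustrate the "match any" semantics without a database, here is a deliberately simplified sketch in plain Rust. It is not how Postgres or SQLx implement it: the names `Item`, `parse_lquery`, `matches`, and `matches_any` are hypothetical, and only exact labels and a bare `*` (zero or more labels) are supported, whereas real lquery has more syntax.

```rust
/// One element of a simplified lquery: an exact label, or `*` which
/// stands for zero or more labels.
#[derive(Debug, Clone, PartialEq)]
enum Item {
    Label(String),
    Star,
}

/// Split a dot-separated pattern into items.
fn parse_lquery(query: &str) -> Vec<Item> {
    query
        .split('.')
        .map(|part| {
            if part == "*" {
                Item::Star
            } else {
                Item::Label(part.to_string())
            }
        })
        .collect()
}

/// Does the whole label path match the whole pattern?
fn matches(items: &[Item], labels: &[&str]) -> bool {
    match items.split_first() {
        // Pattern exhausted: only a match if the path is exhausted too.
        None => labels.is_empty(),
        // `*` may consume any number of leading labels, including none.
        Some((Item::Star, rest)) => {
            (0..=labels.len()).any(|n| matches(rest, &labels[n..]))
        }
        // An exact label must equal the next label in the path.
        Some((Item::Label(expected), rest)) => match labels.split_first() {
            Some((first, tail)) => *first == expected.as_str() && matches(rest, tail),
            None => false,
        },
    }
}

/// Rough equivalent of `ltree ? lquery[]`: true if any pattern matches.
fn matches_any(path: &str, queries: &[&str]) -> bool {
    let labels: Vec<&str> = path.split('.').collect();
    queries.iter().any(|q| matches(&parse_lquery(q), &labels))
}
```

For an expense tracker, a path like expenses.food.groceries would pass a filter of "*.groceries" or "expenses.travel.*" because the first pattern matches.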

    ltree ? lquery[] → boolean
    lquery[] ? ltree → boolean

Does ltree match any lquery in array?

I'm trying to figure out if I can create Service Accounts in Kanidm and get a JWT that will work with pREST. pREST can be configured to use a .well-known URL to pull a JWK. This would allow me to give a long-lived service account API key to each service and keep token generation out of my services.

It looks like not yet! But they seem to be aware of this use case.

ActivityPub is definitely "faster" for conversations, for seeing and responding to replies. That, in many ways, is not a good thing.

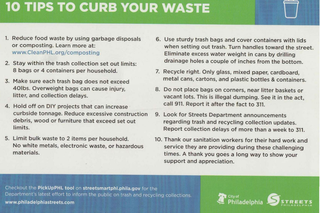

Similar to previous Glitter Litter, the Streets Department is getting in on the action. This card was in our handrail, and many copies like it littered the street. A+

Teable looks interesting but doesn't seem to call out what is limited without the enterprise version.

2 projects listed in This Week in Self-Hosted (27 September 2024): AirTrail and Hoarder. I don't use either, but good to see more self-host stuff adding OIDC!

Giving Mathesar a try for a "life CMS". I loved Notion databases for easily creating structured data and linking between items and have been trying various things to replace it. Maybe this will be it! I like that it's specifically Postgres (as that's most of my homelab). It's missing a good mobile interface and OIDC though.

I spent 90 minutes dehulling soybeans by hand. Wish I'd tried the food processor.